There's no shortage of AI ambition right now. What's in short supply is organizations that have the data foundation, the governance, and the practical know-how to make AI actually deliver. That's where Macula comes in. We've helped enterprise organizations across healthcare, finance, retail, and manufacturing turn AI from a boardroom priority into a production reality.

Before we can run, we need to walk. And in AI, walking means having data that's trusted, governed, and organized enough to train on, query against, or make ready for an agent. The organizations that struggle with AI either have an underlying data challenge or have yet to define the business context needed to realize AI's impact.

If that sounds familiar, our Data Governance practice is a great starting point. Once the foundation is solid, the AI work gets dramatically faster and more reliable. And if you need to understand context gaps, our Macula Blaze solution builds the business context layer that gives your AI something meaningful to work with.

Here's what AI readiness looks like in practice:

Build your AI-ready foundation faster with Macula Blaze MDP — built-in business context, governance, and AI-readiness from day one.

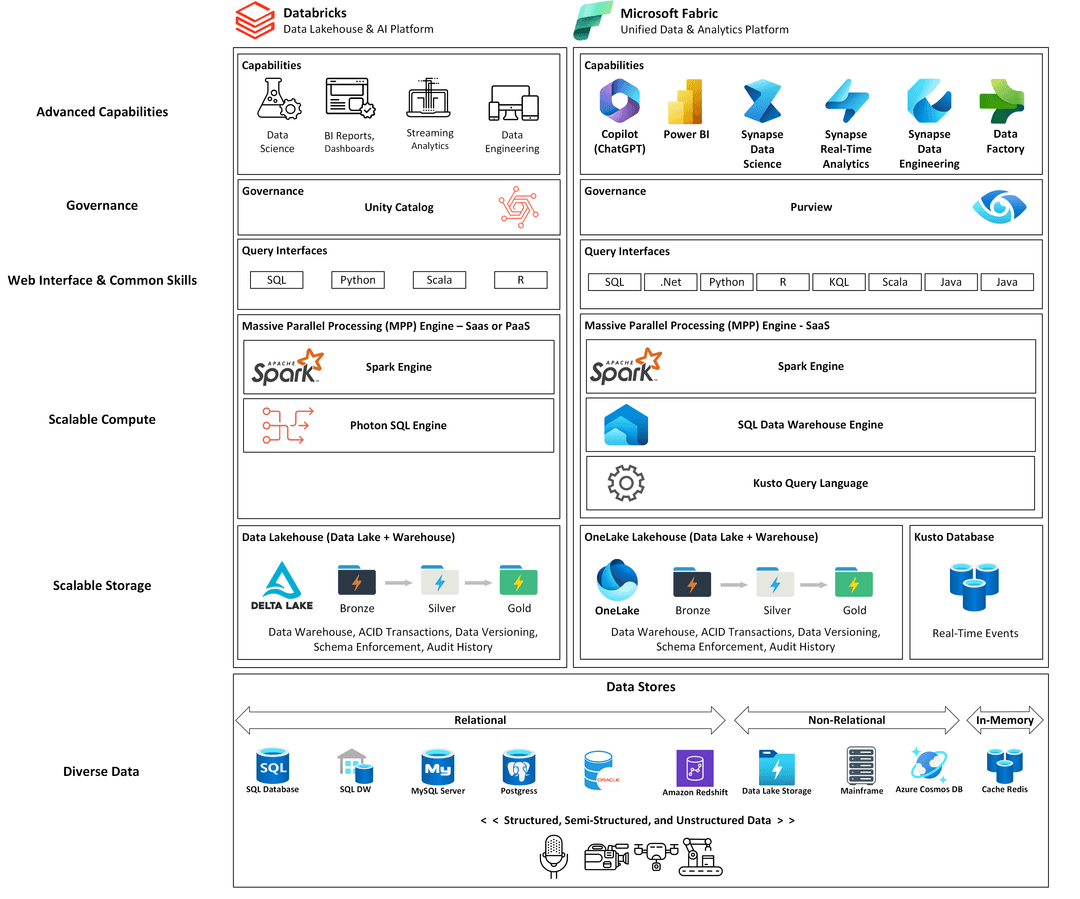

AI needs a home. Macula's cloud platform practice gets you onto the right foundation quickly: Databricks or Microsoft Fabric, depending on your workloads, your team, and where you're headed. We don't pitch you on a platform and walk away. We architect it to your needs, build it with your team, and make sure it can carry the weight of your AI ambitions from day one. As Lakehouse experts, we specialize in these platforms because they excel at both data warehousing and AI, so you don't have to choose.

What a Macula-built AI platform delivers:

Ready to build faster? See how Macula Blaze MDP gets your AI-ready platform running in weeks, not quarters.

The GenAI conversation has moved well past "can we use ChatGPT for this?" The real enterprise opportunity is agentic AI: systems that reason, plan, and take action across your data and workflows. We've been building these systems, and we know where the sharp edges are, what's real versus hype, and how to deploy responsibly.

Macula helps organizations deploy enterprise AI responsibly — private LLM instances grounded in your data, retrieval-augmented generation (RAG) pipelines that stay current, and agentic workflows that automate where it makes sense and keep humans in the loop where it doesn't.

Explore our Viewpoint blog for practical perspectives on enterprise AI — what works, what doesn't, and what to watch.

Enterprise LLM deployment — your models, your data, your infrastructure. Private LLM instances that run against proprietary data without sending sensitive context to the public cloud. Your IP stays yours.

RAG architecture — retrieval pipelines that give models access to your current, trusted enterprise content. No confident hallucinations from stale training data.
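For readers who want to see the idea behind retrieval in concrete terms, here is a minimal sketch of the retrieval step in a RAG pipeline. It is an illustration only, not Macula's implementation: the `DOCUMENTS` store, the function names, and the word-count similarity scoring are all assumptions made for the example. A production pipeline would typically use embeddings, a vector index, and a governed content source.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory content store (assumption for illustration).
# A real pipeline would read from a governed, regularly refreshed source.
DOCUMENTS = {
    "returns-policy": "Customers may return items within 30 days of delivery for a full refund.",
    "shipping-policy": "Standard shipping takes five business days. Express shipping takes two.",
    "warranty-policy": "Hardware is covered by a one year limited warranty against defects.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the k (doc_id, text) pairs most similar to the query."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents.items(),
        key=lambda item: cosine(query_vec, Counter(tokenize(item[1]))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Assemble a prompt that grounds the model in the retrieved content."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("How long does express shipping take?", DOCUMENTS))
```

The point of the design is that the model answers from content fetched at query time, so updating the content store updates the answers without retraining the model.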